As you can see, it doesn’t always detect entities correctly when they’re a bit obscure like the ones in our text sentence. In the example below, it picks out Apple, Spacy, and NLP as ORG entities or organisations, Python as a GPE or geopolitical entity, and 5 as a CARDINAL or number. The ent style in Displacy labels any entities identified. The Displacy visualizer works inside a Jupyter notebook and takes the Spacy document and a style option and visualisation showing the tagged text. Stopwords rarely add much to models so often get stripped out to make models quicker and more effective.Īnother neat thing you can do with Spacy is use the additional Displacy module to visualise POS tagging. You can also see things like the shape of the word (how many characters it has and what case was used), and whether the word is a commonly used stop word, such as “is”, “with”, or “in”. They’re usually used in conjunction with token.tag_, which provides some deeper information. These include Parts of Speech or POS tags, stored in token.pos_, which contain a value such as NUM or NOUN to indicate what Spacy detected. The code below will extract some of the most widely used Spacy token attributes and put them in a Pandas dataframe. However, there are a wide range of other token attributes you can also extract with Spacy.

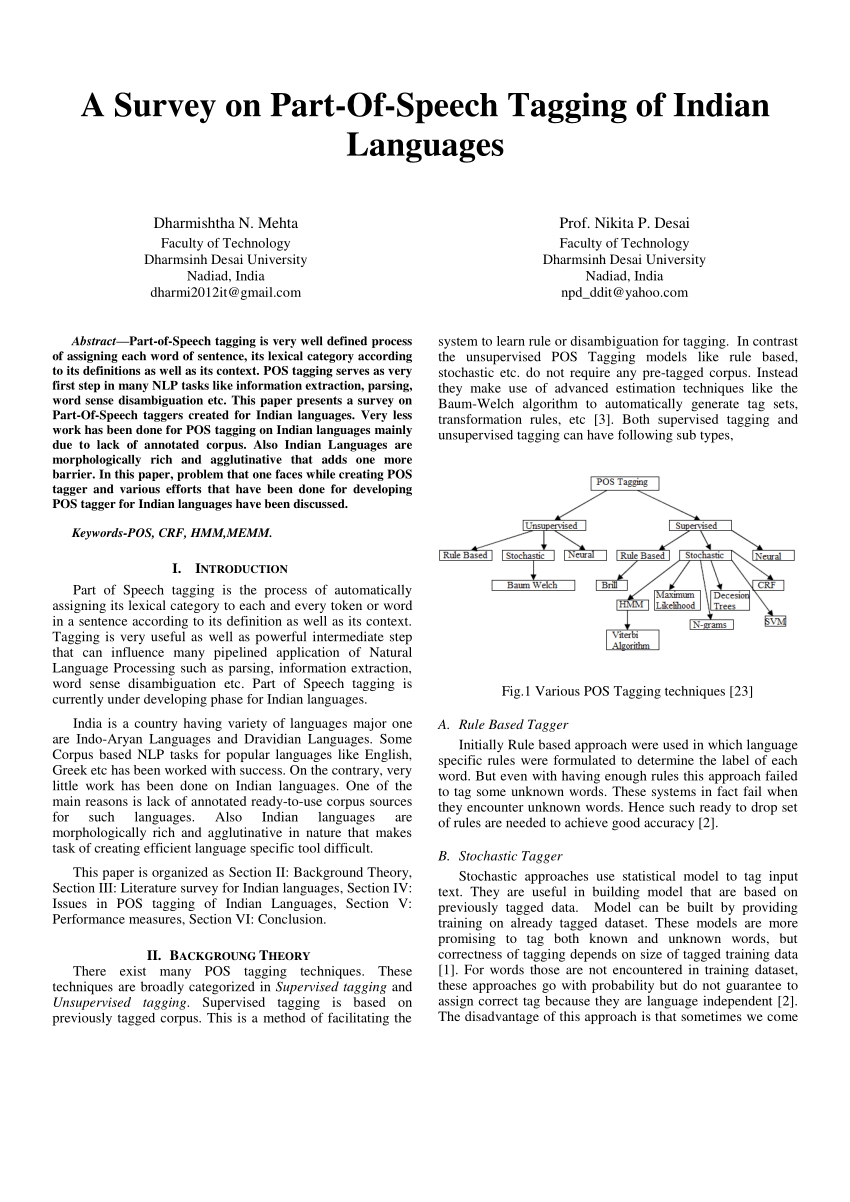

We’ve already seen that the token returned by Spacy contains the text, such as the word, number, or punctuation, within the token.text element. To install this you need to execute a command line command !python3 -m spacy download en_core_web_sm and wait a couple of minutes for everything to install.Īpple is seeking 5 new data scientists with skills in Python, Pandas, and Spacy. The most commonly used one is en_core_web_sm, but other more accurate models are available. Once this is installed, you’ll need to download a Spacy model. To get started, open a Jupyter notebook and install the Spacy package via the Pip Python package management system using !pip3 install spacy. We’ll tokenize the words in a sentence, tokenize the sentences in a paragraph, use lemmatization, detect stopwords, and extract parts of speech and their tags to a Pandas dataframe. In this simple tutorial, we’ll use Spacy for Parts of Speech tagging (or POS tagging), and NLP text preprocessing. It supports all common tasks out of the box, and is also highly extensible. Alongside the Natural Language Toolkit (NLTK), Spacy provides a huge range of functionality for a wide variety of NLP tasks. Take a look at the following example.Spacy is one of the most popular Python packages for Natural Language Processing. A NER model developed for one domain may not perform well for other domains. One problem with Named Entity Recognition is that they are domain-specific.
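To check which entities the model finds, the entity labelling described above can be sketched roughly as follows. This is a minimal sketch, not the original tutorial code: the helper names entity_pairs and render_entities are hypothetical, and it assumes the en_core_web_sm model has already been downloaded and that the code runs inside a Jupyter notebook.

```python
def entity_pairs(doc):
    """Return (text, label) pairs for every entity found in a Spacy document."""
    return [(ent.text, ent.label_) for ent in doc.ents]


def render_entities(text, model="en_core_web_sm"):
    """Render the entities in `text` with Displacy and return them as pairs.

    Hypothetical helper: assumes the named model is installed and that the
    call is made from a Jupyter notebook cell.
    """
    # Imported lazily so entity_pairs can be used without Spacy installed.
    import spacy
    from spacy import displacy

    nlp = spacy.load(model)
    doc = nlp(text)
    # style="ent" highlights named entities in the text.
    displacy.render(doc, style="ent", jupyter=True)
    return entity_pairs(doc)
```

Calling render_entities on the test sentence in a notebook cell should display the highlighted text and return the detected entities, which makes it easy to compare the labels with what you expected for your own domain.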
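The token attributes mentioned in this tutorial (text, lemma, POS tag, fine-grained tag, shape, and stop word flag) can be gathered into a Pandas dataframe along these lines. Again, this is only a sketch under assumptions: token_rows and attributes_frame are hypothetical names, the column names are my own choice, and the en_core_web_sm model is assumed to be installed.

```python
def token_rows(doc):
    """Collect commonly used attributes from each token in a Spacy document."""
    return [
        {
            "text": token.text,        # the raw token text
            "lemma": token.lemma_,     # base form of the word
            "pos": token.pos_,         # coarse part of speech, e.g. NOUN or NUM
            "tag": token.tag_,         # fine-grained part-of-speech tag
            "shape": token.shape_,     # pattern of case and digits, e.g. Xxxxx
            "is_stop": token.is_stop,  # True for common stop words
        }
        for token in doc
    ]


def attributes_frame(text, model="en_core_web_sm"):
    """Run the model over `text` and return one dataframe row per token.

    Hypothetical helper: assumes Spacy, Pandas, and the named model are installed.
    """
    # Imported lazily so token_rows can be used without Spacy or Pandas installed.
    import pandas as pd
    import spacy

    nlp = spacy.load(model)
    return pd.DataFrame(token_rows(nlp(text)))
```

In a notebook, attributes_frame("Apple is seeking 5 new data scientists with skills in Python, Pandas, and Spacy.") should give one row per token, which you can then filter, for example on the is_stop column to drop stopwords.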
